Dynamic Graph Structure-Based Point Cloud Complementation for Real Underwater Scene


Abstract

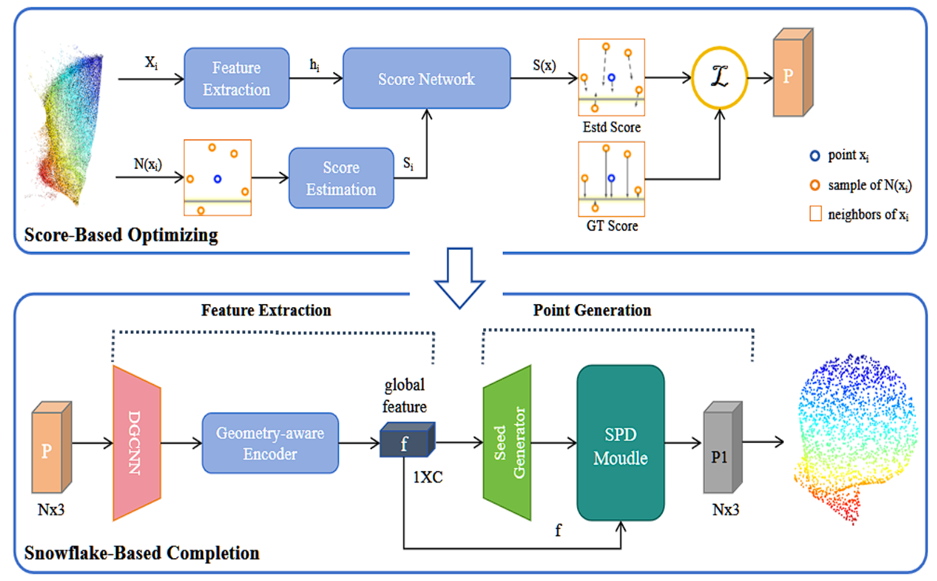

Point cloud completion is important in many applications, including underwater exploration, object recognition, and navigation. Underwater point clouds are commonly generated from underwater camera images using multi-view stereo. However, the underwater environment severely degrades visual data, so the resulting point clouds are often noisy and incomplete. To address these problems, this study proposes UDPCN, a network for refining and completing underwater point clouds. The network is designed to predict and fill in the missing regions of point clouds captured underwater. In the point cloud optimization module, the method learns the underlying manifold of the clean point cloud and captures the distribution of the degraded point cloud using a score-based model. Global optimization is then performed by applying the predicted displacement of each point to the original point cloud. An improved DGCNN is integrated into the encoder to extract local features. A geometric perception encoder then extracts global features of the point cloud, so that structural information and inter-point relationships are modeled comprehensively. To demonstrate the effectiveness of the approach, experiments and evaluations are conducted on a real-scene underwater dataset constructed for this study by collecting CAD data with 3D scanners and underwater cameras. Extensive experiments and comparisons show that the proposed method outperforms several existing methods in real underwater scenes.
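To make the local feature extraction step more concrete, the following is a minimal sketch of how edge features are built on a k-nearest-neighbor graph in the standard DGCNN formulation, where each edge carries the center point concatenated with the offset to a neighbor. It is not the improved DGCNN variant used in UDPCN, whose details the abstract does not give. The function names `knn_indices` and `edge_features`, the use of NumPy, and the choice to exclude each point from its own neighbor list are all assumptions made for illustration.

```python
import numpy as np


def knn_indices(points: np.ndarray, k: int) -> np.ndarray:
    """Return the indices of the k nearest neighbors of each point.

    points: array of shape (N, C). Hypothetical helper, not from the paper.
    """
    # Pairwise squared Euclidean distances, shape (N, N).
    diff = points[:, None, :] - points[None, :, :]
    dist2 = np.sum(diff ** 2, axis=-1)
    # Assumption: a point is not counted as its own neighbor.
    np.fill_diagonal(dist2, np.inf)
    # Sort each row by distance and keep the k closest indices.
    return np.argsort(dist2, axis=1)[:, :k]


def edge_features(points: np.ndarray, k: int) -> np.ndarray:
    """Build DGCNN-style edge features [x_i, x_j - x_i] for each neighbor j of i.

    Returns an array of shape (N, k, 2 * C).
    """
    idx = knn_indices(points, k)                         # (N, k)
    neighbors = points[idx]                              # (N, k, C)
    centers = np.repeat(points[:, None, :], k, axis=1)   # (N, k, C)
    # Concatenate the center coordinates with the relative neighbor offsets.
    return np.concatenate([centers, neighbors - centers], axis=-1)


# Usage on a synthetic cloud of 128 points in 3D (random data, for shape only).
rng = np.random.default_rng(0)
cloud = rng.normal(size=(128, 3))
features = edge_features(cloud, k=8)
print(features.shape)  # (128, 8, 6)
```

In a dynamic graph network, the neighbor search is typically repeated in the feature space of each layer rather than only on the input coordinates, so the graph changes as features are updated. The brute-force distance matrix here is only practical for small clouds.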